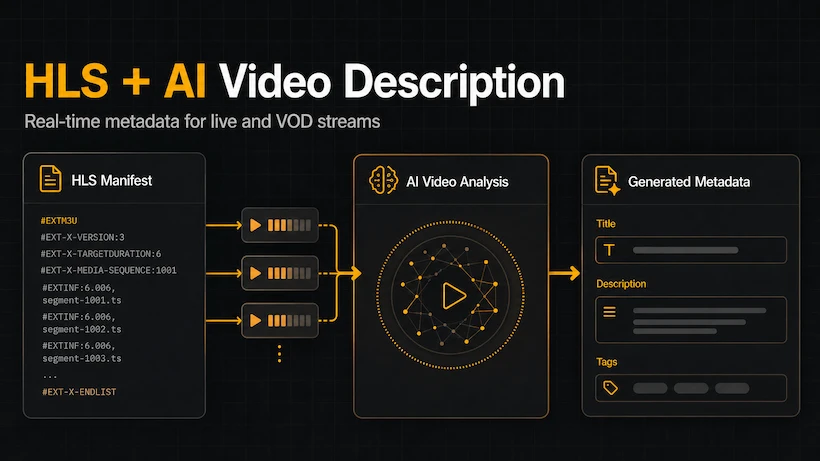

HLS + AI Video Description: Real-Time Metadata for Live and VOD Streams in 2026

HLS is the dominant streaming protocol in 2026. Learn how modern Video Description APIs can generate accurate titles, descriptions, and tags in real time — for both live streams and large VOD libraries — without manual work.


HTTP Live Streaming (HLS) has become the default protocol for video delivery in 2026. Whether you're running a live sports platform, a large VOD library, or a user-generated content site, chances are you're already using HLS — or planning to.

But here's the problem most platforms still face:

Generating high-quality titles, descriptions, and tags for HLS content at scale is extremely difficult.

Traditional approaches (manual writing or basic AI tools) break down when dealing with:

- Live streams that never end

- Master playlists with multiple renditions and bitrates

- Thousands of short segments

- The need for real-time metadata (not hours later)

This is exactly why we recently added native HLS support to the Descrideo Video Description API.

Why HLS Changes Everything for Video Metadata

HLS is fundamentally different from simple MP4 files:

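- Content is split into many short media segments (typically a few seconds each)

- A master playlist lists the available renditions, with their bitrates, resolutions, and codecs

- Live playlists keep growing as new segments are appended, and often drop old ones in a sliding window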

- Viewers can join at any point
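To make the structure concrete, here is a minimal Python sketch that reads the variant streams out of a master playlist. It handles only the `#EXT-X-STREAM-INF` tag and its `BANDWIDTH`, `RESOLUTION`, and `CODECS` attributes as defined in the HLS specification (RFC 8216). The function and class names are hypothetical, and this is not Descrideo's implementation. A production parser would also need `#EXT-X-MEDIA` alternate renditions, relative-URI resolution, and error handling.

```python
import re
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Matches KEY=VALUE pairs in an HLS attribute list. Quoted values may contain
# commas, e.g. CODECS="avc1.64001f,mp4a.40.2", so they are matched as a whole.
_ATTR_RE = re.compile(r'([A-Z0-9-]+)=("[^"]*"|[^,]*)')


@dataclass
class Rendition:  # hypothetical container for one variant stream
    uri: str
    bandwidth: int                           # peak bits per second
    resolution: Optional[Tuple[int, int]]    # (width, height), if declared
    codecs: Optional[str]


def parse_attributes(attr_list: str) -> dict:
    """Turn 'BANDWIDTH=800000,RESOLUTION=640x360' into a dict, unquoting strings."""
    return {k: v.strip('"') for k, v in _ATTR_RE.findall(attr_list)}


def parse_master_playlist(text: str) -> List[Rendition]:
    """Collect variant streams: each #EXT-X-STREAM-INF tag applies to the next URI line."""
    renditions = []
    pending = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("#EXT-X-STREAM-INF:"):
            pending = parse_attributes(line.split(":", 1)[1])
        elif not line.startswith("#") and pending is not None:
            res = pending.get("RESOLUTION")
            renditions.append(Rendition(
                uri=line,
                bandwidth=int(pending["BANDWIDTH"]),
                resolution=tuple(int(x) for x in res.split("x")) if res else None,
                codecs=pending.get("CODECS"),
            ))
            pending = None
    return renditions
```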

Because of this structure, many AI tools that work fine with regular video files fail or produce poor results with HLS. They either:

- Only analyze the first few segments

- Can't handle live content

- Don't understand the relationship between different renditions

A Video Description API built for HLS has to work from the whole manifest: which renditions exist, how the segments add up to a timeline, and whether the stream is still live.
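Working from the whole manifest also means reading the media playlist behind each rendition. The sketch below uses hypothetical names and is not Descrideo's code. It parses segment durations from `#EXTINF` and tracks `#EXT-X-MEDIA-SEQUENCE`. It treats a playlist without `#EXT-X-ENDLIST` as still live, and compares two refreshes of a live playlist by sequence number so that only newly appended segments get processed.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Segment:  # hypothetical
    sequence: int    # media sequence number of this segment
    duration: float  # seconds, from #EXTINF
    uri: str


@dataclass
class MediaPlaylist:  # hypothetical
    target_duration: int = 0
    segments: List[Segment] = field(default_factory=list)
    ended: bool = False  # True once #EXT-X-ENDLIST is present (VOD or finished event)

    @property
    def is_live(self) -> bool:
        return not self.ended

    @property
    def total_duration(self) -> float:
        return sum(s.duration for s in self.segments)


def parse_media_playlist(text: str) -> MediaPlaylist:
    pl = MediaPlaylist()
    seq = 0  # the spec's default when #EXT-X-MEDIA-SEQUENCE is absent
    pending_duration = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("#EXT-X-TARGETDURATION:"):
            pl.target_duration = int(line.split(":", 1)[1])
        elif line.startswith("#EXT-X-MEDIA-SEQUENCE:"):
            seq = int(line.split(":", 1)[1])
        elif line.startswith("#EXTINF:"):
            # "#EXTINF:6.006," -> 6.006 (the title after the comma is optional)
            pending_duration = float(line.split(":", 1)[1].split(",", 1)[0])
        elif line == "#EXT-X-ENDLIST":
            pl.ended = True
        elif line and not line.startswith("#") and pending_duration is not None:
            pl.segments.append(Segment(seq, pending_duration, line))
            seq += 1
            pending_duration = None
    return pl


def new_segments(previous: MediaPlaylist, current: MediaPlaylist) -> List[Segment]:
    """Segments in a refreshed live playlist that were not in the previous snapshot."""
    last_seen = max((s.sequence for s in previous.segments), default=-1)
    return [s for s in current.segments if s.sequence > last_seen]
```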

How Descrideo Processes HLS Streams

When you send an HLS URL to the Descrideo API, here's what happens:

- Manifest parsing: we parse the master playlist to identify all available renditions, resolutions, and codecs.

- Smart segment sampling: instead of processing every segment (which would be slow and expensive), we select representative frames from across the entire stream.

- Vision + audio analysis: we combine visual understanding with audio transcription for higher accuracy.

- Real-time webhook delivery: as soon as metadata is ready, we push it to your system via signed webhooks, so no polling is required.

This architecture allows us to generate high-quality metadata even for:

- Long-form live streams (concerts, sports, webinars)

- Large VOD libraries with thousands of hours

- Short-form vertical video (Reels, TikTok-style content)
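Smart segment sampling is what keeps multi-hour content tractable. Descrideo does not document its exact strategy, so the following is only an illustrative assumption. It spreads `n` sample timestamps evenly across the timeline and maps each one to the segment that contains it, so only those segments need to be fetched. The function name and the centered-slice rule are hypothetical.

```python
from bisect import bisect_right
from itertools import accumulate
from typing import List, Tuple


def sample_points(durations: List[float], n: int) -> List[Tuple[int, float]]:
    """Pick n evenly spaced timestamps across the whole timeline (hypothetical strategy).

    Returns (segment_index, offset_within_segment_seconds) pairs, so only
    the segments that contain a sample point need to be downloaded.
    """
    if n <= 0 or not durations:
        return []
    # ends[i] = timestamp (seconds) at which segment i ends
    ends = list(accumulate(durations))
    total = ends[-1]
    points = []
    for k in range(n):
        # Center each sample in its 1/n slice of the timeline, so the very
        # first and last instants (often slates or black frames) are skipped.
        t = (k + 0.5) * total / n
        i = bisect_right(ends, t)  # first segment that ends after t
        start = ends[i - 1] if i > 0 else 0.0
        points.append((i, t - start))
    return points
```

For a 2-hour VOD with 6-second segments (1,200 segments), asking for 60 sample points touches at most 60 segments instead of all 1,200.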

Real-World Use Cases

Live Streaming Platforms

Automatically generate titles and descriptions for live events the moment they go live. No more "Live Stream" as a placeholder title.

VOD Libraries

Process entire catalogs in the background. New uploads get rich metadata within minutes, improving search and recommendations immediately.

User-Generated Content Platforms

Handle unpredictable content at scale. Even if users upload HLS streams in different formats and qualities, the API returns consistent, high-quality metadata.

Accessibility Workflows

Generate descriptions that support WCAG accessibility requirements for live and archived HLS content, work that was previously very expensive to do manually.

Technical Advantages

- Support for master playlists: we automatically choose the best rendition for analysis while respecting your bandwidth settings.

- Segment-aware processing: we follow the HLS structure and avoid the common pitfalls that break other AI tools, such as analyzing only the first few segments or failing on live playlists.

- Webhook-first design: a natural fit for modern event-driven architectures.

- Consistent output: the same quality whether the source is a 30-second clip or a 4-hour live stream.
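Choosing the best rendition for analysis while respecting a bandwidth budget can be sketched as a simple selection rule. This assumes a single hypothetical `max_bandwidth` cap in bits per second, compared against each variant's `BANDWIDTH` attribute. Descrideo's real settings and selection logic may differ.

```python
from typing import List, NamedTuple, Optional


class Variant(NamedTuple):  # hypothetical, minimal view of a variant stream
    uri: str
    bandwidth: int  # peak bits per second, from the BANDWIDTH attribute
    height: int     # vertical resolution in pixels, from RESOLUTION


def choose_analysis_variant(variants: List[Variant],
                            max_bandwidth: Optional[int] = None) -> Variant:
    """Pick the highest-quality variant whose BANDWIDTH fits under the cap.

    Hypothetical rule: if nothing fits, fall back to the lowest-bandwidth
    variant so analysis can still proceed on a constrained budget.
    """
    if not variants:
        raise ValueError("master playlist has no variant streams")
    allowed = [v for v in variants
               if max_bandwidth is None or v.bandwidth <= max_bandwidth]
    if not allowed:
        return min(variants, key=lambda v: v.bandwidth)
    # Prefer higher resolution; break ties with higher bitrate.
    return max(allowed, key=lambda v: (v.height, v.bandwidth))
```

Ranking by resolution first and bitrate second is a design choice. A pipeline that downsamples frames anyway might prefer a lower cap to save bandwidth.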

How to Get Started with HLS + Descrideo

It's simple:

- Send your HLS master playlist URL to the API (just like you would with MP4).

- Choose a generation mode (`vision`, `audio`, or `vision_audio`).

- Receive structured metadata via webhook (title, description, tags, and even VideoObject schema).
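Because results arrive as signed webhooks, the receiving side should verify the signature before trusting the payload. Descrideo's actual signing scheme is not described here, so this sketch assumes a common pattern. The sender computes an HMAC-SHA256 hex digest of the raw request body with a shared secret and sends it alongside the request. The payload field names (`title`, `description`, `tags`) mirror the metadata listed above but are also assumptions.

```python
import hashlib
import hmac
import json


def verify_signature(raw_body: bytes, signature_hex: str, secret: str) -> bool:
    """Check an HMAC-SHA256 signature of the raw request body.

    Assumed scheme (check the provider's docs for the real one): the sender
    signs the exact bytes it posts and sends the hex digest in a header.
    compare_digest avoids leaking timing information.
    """
    expected = hmac.new(secret.encode(), raw_body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature_hex)


def handle_webhook(raw_body: bytes, signature_hex: str, secret: str) -> dict:
    """Verify first, then extract the (assumed) title, description, and tags fields."""
    if not verify_signature(raw_body, signature_hex, secret):
        raise PermissionError("invalid webhook signature")
    payload = json.loads(raw_body)
    return {
        "title": payload.get("title", ""),
        "description": payload.get("description", ""),
        "tags": list(payload.get("tags", [])),
    }
```

Verifying against the raw bytes, before any JSON parsing, matters: re-serializing parsed JSON can change whitespace or key order and break the signature.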

You can test everything right now in our free sandbox — no credit card required.

Want to see how well AI can describe your HLS content?

Try Descrideo's free sandbox → Test real HLS streams in minutes.

See how other teams are using it: Explore real use cases →

Descrideo is a developer-first Video Description API with native HLS support, combining vision and audio analysis to generate accurate, SEO-ready metadata for both live and on-demand content at scale.